About Me

I am an undergraduate at MIT (Class of 2028), double-majoring in Physics (Course 8) and Artificial Intelligence & Decision Making (Course 6-4). I am currently an undergraduate researcher in Professor Kaiming He’s group, working on computer vision and generative modeling. My current interests focus on one-step diffusion algorithms, text-to-image generation, and multimodal models.

I have worked on diffusion models and one-step image generation methods. More recently, my projects involve text-to-image generation and unified multimodal training. In addition to algorithmic research, I spend significant time building training infrastructure for the research group, including JAX/TPU workflows, distributed optimization, and efficient experiment management.

Before college, I competed in the Physics Olympiad during high school in China and won a gold medal at the 53rd International Physics Olympiad (IPhO). Afterward, I spent a preparatory year at the IIIS of Tsinghua University (a.k.a. the “Yao Class”), where I began my journey into deep learning and artificial intelligence.

Although I am still a beginner, I am eager to explore opportunities and collaborations. I also enjoy engaging with people who share similar interests and chatting about anything from research ideas to personal experiences. Feel free to reach out if you’d like to connect!

My resume is linked here.

Publications

Selected Projects

-

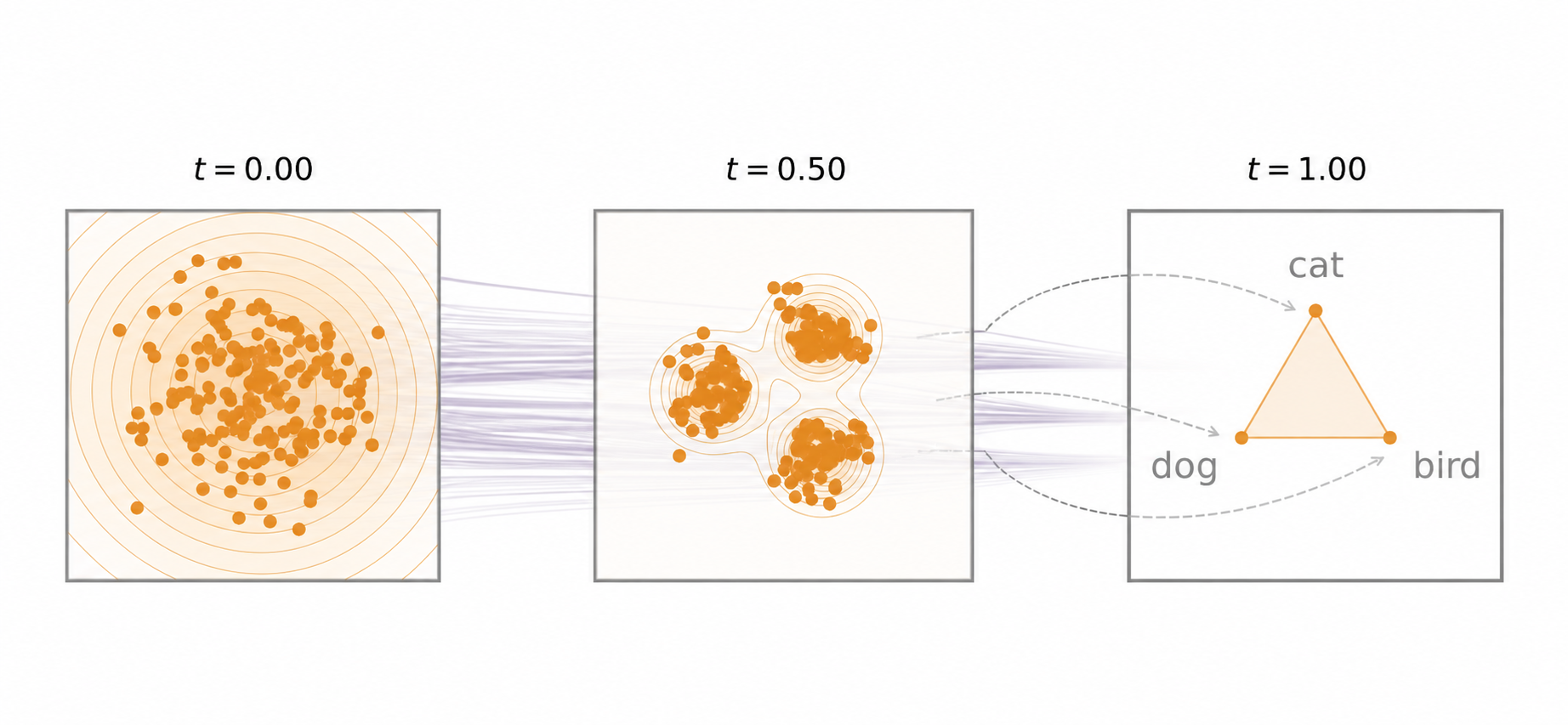

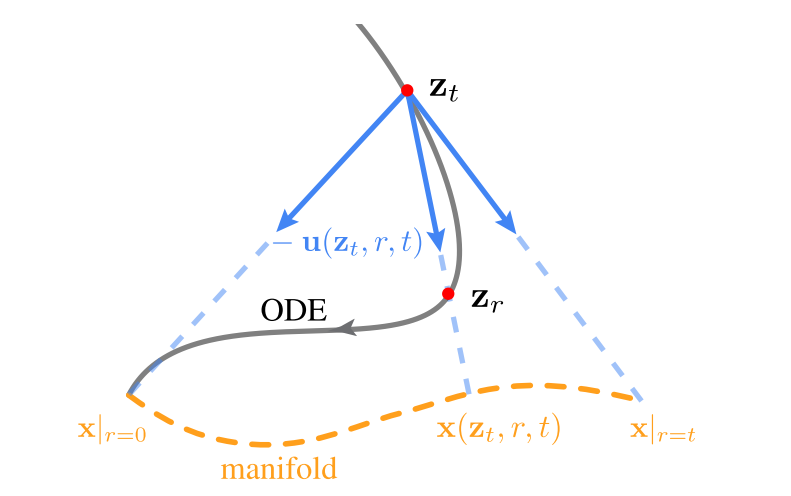

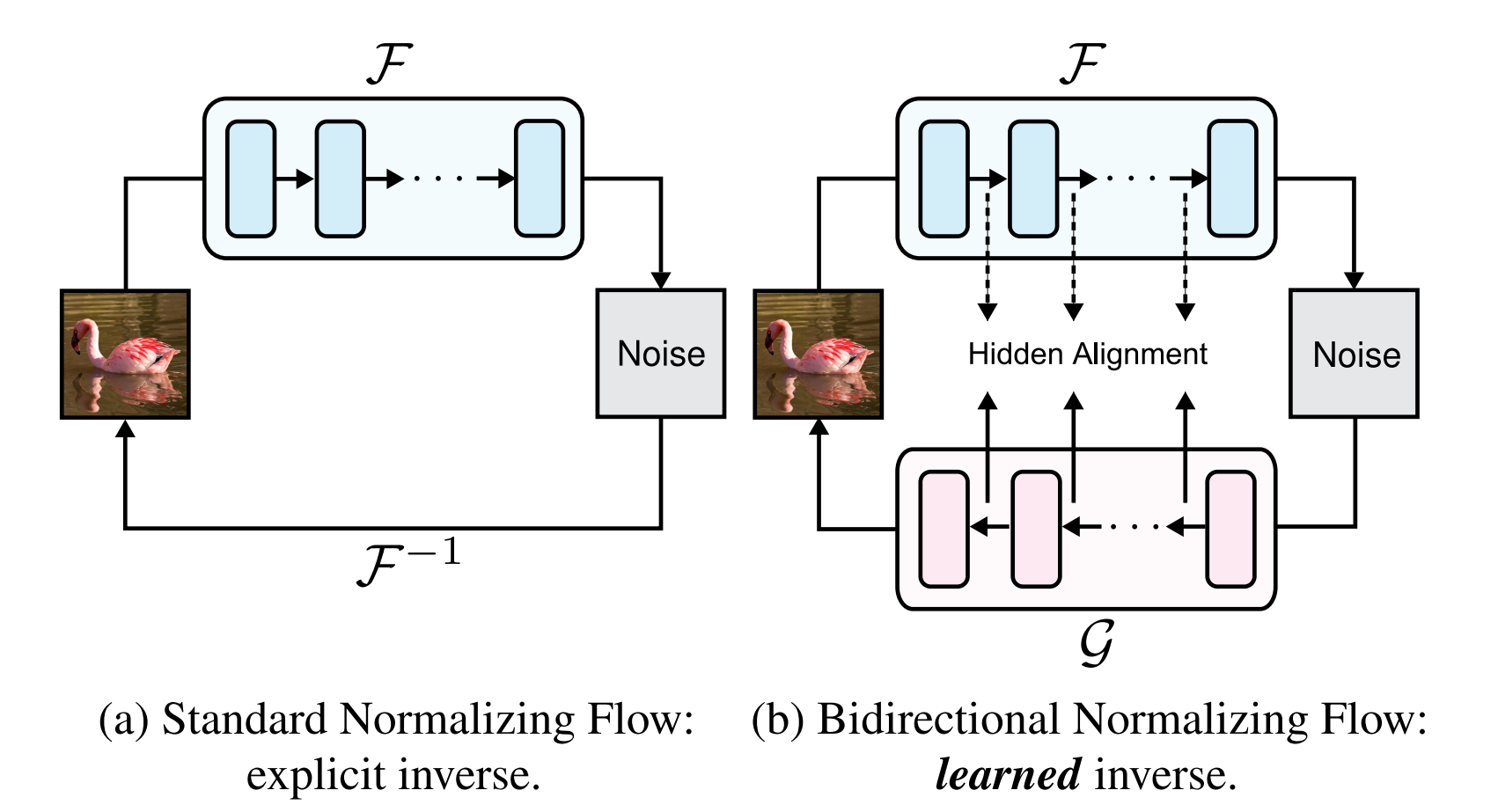

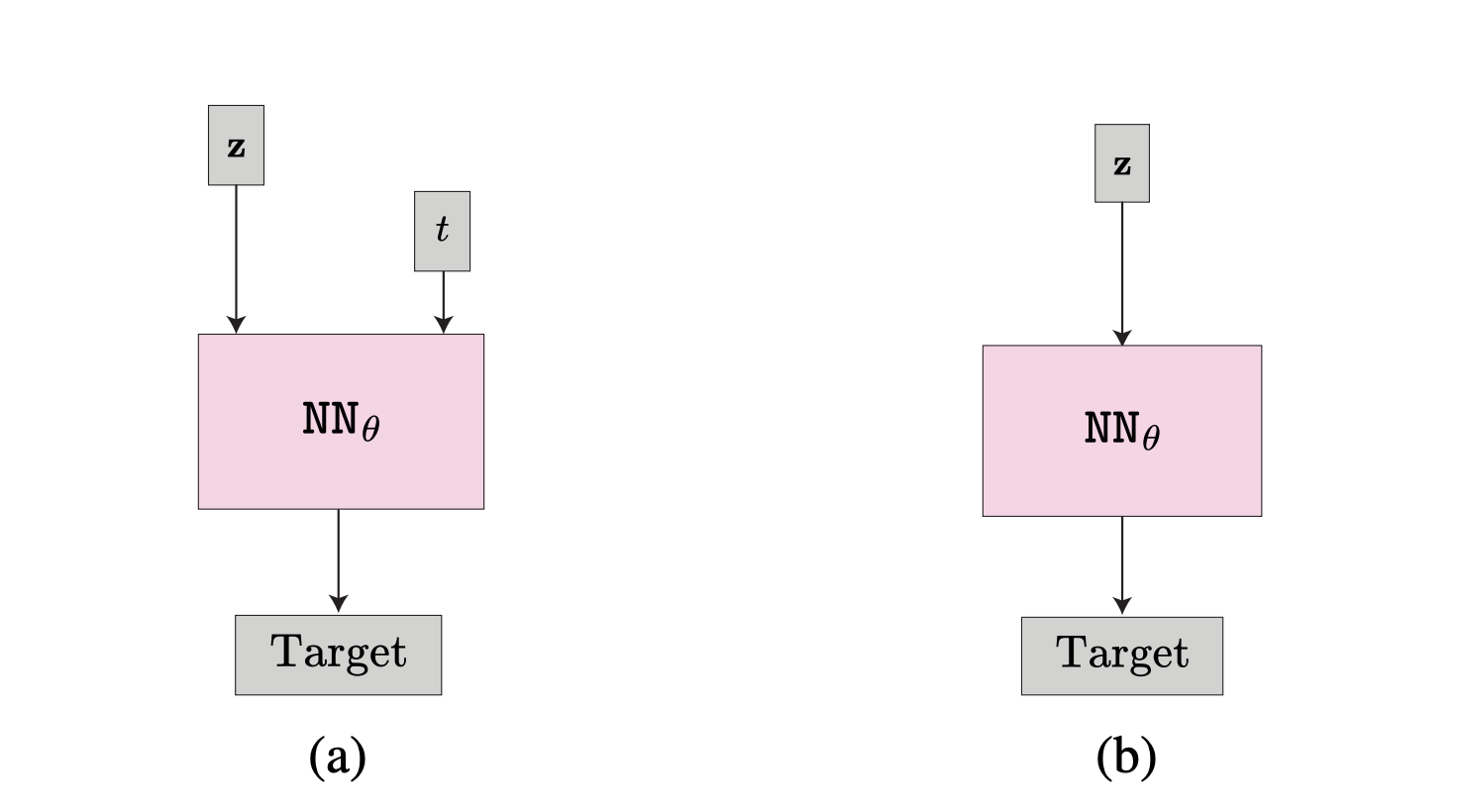

Speeding Up Diffusion Models with One-step Generators

This is the final project for the seminar course 6.S978: Deep Generative Models at MIT. In this project, we proposed a new method to speed up the training of diffusion models by using one-step generators. In toy experiments, this halves the number of function evaluations (NFE) while maintaining sample quality. We also wrote a blog post explaining the motivation behind the experiment from a higher-level perspective.

-

This is the project for the course Introduction to Large Language Model Application at IIIS, Tsinghua University. In this project, we apply LLMs to answer user questions using a folder of documents as context. We developed a tagging system that keeps search efficient even when the number of documents is large. We also support semantic search over multimodal documents, such as images and videos.

-

In this repository, I implement classic and modern deep learning models from scratch. Rather than relying on extensive tricks and hyperparameter tuning, I try to keep each implementation simple and easy to follow while still giving reasonable results. I also analyze which tricks are most necessary for each model to work, so that I can identify the problem more quickly when a new model doesn't work as expected.

The repository is still under construction, and I will keep updating it with more models and analysis.